Deepfake technology raises serious ethical concerns because it can be used to create realistic images and videos of people without their consent. It threatens personal privacy and spreads misinformation, making media harder to trust. Addressing these problems means questioning the authenticity of online content and developing better detection tools. The sections below cover these challenges and the efforts to address them.

Key Takeaways

- Deepfake technology raises significant ethical concerns regarding consent, privacy, and the misuse of personal likenesses without permission.

- It poses risks of spreading misinformation and false content, undermining trust in media and challenging verification processes.

- Creating deepfakes without consent infringes on individual autonomy and can cause psychological harm or reputational damage.

- Developing detection tools and legal frameworks is essential to address ethical issues and hold malicious creators accountable.

- Promoting responsible innovation and digital literacy helps mitigate ethical risks and fosters an ethical digital environment.

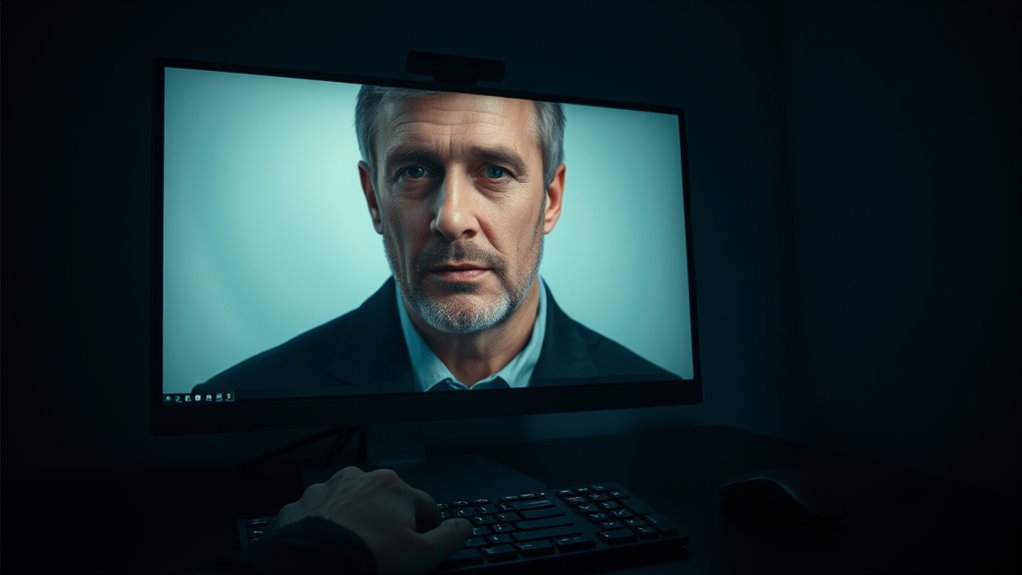

Have you ever wondered about the ethical implications of deepfake technology? As you explore this rapidly advancing field, you’ll quickly realize that it raises serious concerns about consent and misinformation. Deepfakes, which use artificial intelligence to create hyper-realistic videos or images, can manipulate reality in ways that were previously unimaginable. This power to convincingly alter appearances or voices raises a basic question: who really owns a person’s likeness? Producing a deepfake of someone without their permission is a clear violation of consent. Imagine seeing a video of a public figure saying something controversial when, in reality, they never made that statement. Such scenarios show how non-consensual deepfakes threaten personal privacy and autonomy. It’s not just about celebrities; ordinary individuals can also be targeted, with their faces or voices used in malicious ways without their knowledge or approval. This creates a chilling effect, where people may become hesitant to participate in digital spaces for fear of misuse.

Alongside the consent dilemma, misinformation risks are perhaps even more alarming. Deepfakes have the potential to spread false information rapidly, undermining trust in media and institutions. You might come across a convincing video that seems to show a politician making a controversial speech or a celebrity endorsing a product when, in fact, it’s entirely fabricated. The danger is that these videos can be very hard to distinguish from real footage, making it difficult for the average viewer to separate truth from fiction. When such videos circulate widely, they can sow discord, influence elections, or incite violence, all based on falsehoods. The speed and ease with which deepfakes can be produced amplify these risks and make misinformation harder to combat. As authenticity and trust become more important to audiences, so does the development of reliable detection and verification tools.

Addressing these ethical issues requires a nuanced approach. You need to be vigilant, questioning the authenticity of what you see online and supporting efforts to develop detection tools. It also calls for legal frameworks that protect individuals’ rights and hold creators accountable for malicious use. Ultimately, the ethical use of deepfake technology hinges on respecting consent and actively working to minimize misinformation risks. As you navigate a media landscape increasingly saturated with deepfakes, understanding these dilemmas helps you critically evaluate content and advocate for responsible innovation. Recognizing the profound impact of deepfakes on privacy, truth, and trust is essential for fostering an ethical digital environment where technology serves society rather than harming it.

Frequently Asked Questions

How Can Individuals Detect Deepfakes Effectively?

To detect deepfakes effectively, you should improve your digital literacy by staying informed about common deepfake signs, like unnatural blinking or inconsistent facial features. Always verify sources before trusting videos, especially if something seems suspicious. Use fact-checking tools and reverse image searches to confirm authenticity. Being cautious and questioning suspicious content helps you identify deepfakes and avoid spreading misinformation.
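To make the reverse image search step more concrete, here is a minimal sketch of one simple idea behind near-duplicate image matching: a perceptual "average hash". This is an illustration only, not how any particular search service works; real tools use far more sophisticated methods. The function names, the tiny 4x4 grayscale grids, and the `max_distance` threshold are all assumptions chosen for the example.

```python
def average_hash(pixels):
    """Return a bit list: 1 where a pixel is brighter than the image mean.

    `pixels` is a 2D list of grayscale values (0-255). In practice an image
    would first be downscaled to a small grid; here we assume that is done.
    """
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    return [1 if value > mean else 0 for value in flat]


def hamming_distance(bits_a, bits_b):
    """Count the positions where two equal-length bit lists differ."""
    if len(bits_a) != len(bits_b):
        raise ValueError("hashes must have the same length")
    return sum(a != b for a, b in zip(bits_a, bits_b))


def looks_like_same_image(pixels_a, pixels_b, max_distance=2):
    """Hypothetical helper: treat images as near-duplicates if hashes are close."""
    distance = hamming_distance(average_hash(pixels_a), average_hash(pixels_b))
    return distance <= max_distance


# Toy 4x4 "images" (assumed data, for illustration only).
original = [
    [10, 200, 30, 220],
    [15, 210, 25, 215],
    [200, 10, 220, 30],
    [210, 20, 215, 25],
]
# The same picture, uniformly brightened (e.g. after re-encoding).
brightened = [[value + 10 for value in row] for row in original]
# A different picture: every pixel inverted.
inverted = [[255 - value for value in row] for row in original]

same = looks_like_same_image(original, brightened)
different = looks_like_same_image(original, inverted)
```

A match only tells you that a suspicious image resembles an earlier one, which helps you trace it back to a source. It does not by itself show whether either copy is genuine.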

What Legal Actions Are Available Against Deepfake Creators?

Depending on the jurisdiction, you may be able to take legal action against deepfake creators for defamation, fraud, or invasion of privacy. If a deepfake also infringes intellectual property rights, copyright or trademark enforcement may be an option. These remedies help protect your reputation and rights from malicious manipulation and unauthorized use of your content.

Are There Positive Uses for Deepfake Technology?

Yes, deepfake technology has positive uses, such as entertainment applications like movies and virtual reality experiences. It also fosters educational innovations by creating realistic historical reenactments or interactive learning tools. You can see deepfakes helping bring stories to life or making complex concepts easier to understand. When used responsibly, this technology can enhance creativity and learning, transforming how you experience media and education.

How Does Deepfake Technology Impact Privacy Rights?

Deepfake technology impacts your privacy rights by enabling data manipulation and raising consent issues. You might find your likeness used without permission, which threatens your control over personal images. This technology can create false representations, making it hard to trust digital content. You need to stay vigilant and advocate for strong regulations to protect your privacy, ensuring your consent is respected and your data isn’t exploited without your knowledge.

What Are Future Technological Safeguards Against Malicious Deepfakes?

Proposed safeguards against malicious deepfakes include automated detection systems and blockchain-based verification. Automated detection flags content that is likely to have been manipulated, while blockchain verification keeps a tamper-evident record of original content so that later copies can be checked against it. No system is perfect, and neither approach guarantees authenticity on its own, but combining them makes it harder for bad actors to spread false information.
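The record-keeping idea behind blockchain verification can be sketched with a simple hash chain. This is a minimal sketch under stated assumptions, not a real blockchain or any specific product: the in-memory `ledger` list, the record fields, and the function names are all hypothetical. It uses only Python's standard `hashlib` and `json` modules.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # assumed placeholder for the first record's predecessor


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of `data` as a hex string."""
    return hashlib.sha256(data).hexdigest()


def _record_hash(body: dict) -> str:
    """Hash a record body deterministically (keys sorted before encoding)."""
    return sha256_hex(json.dumps(body, sort_keys=True).encode("utf-8"))


def append_record(ledger: list, content: bytes, source: str) -> dict:
    """Register content by storing its fingerprint, linked to the previous record."""
    prev_hash = ledger[-1]["record_hash"] if ledger else GENESIS_HASH
    body = {
        "source": source,
        "content_hash": sha256_hex(content),
        "prev_hash": prev_hash,
    }
    record = {**body, "record_hash": _record_hash(body)}
    ledger.append(record)
    return record


def ledger_is_intact(ledger: list) -> bool:
    """Check that no record was altered and that the chain links are unbroken."""
    prev_hash = GENESIS_HASH
    for record in ledger:
        body = {key: record[key] for key in ("source", "content_hash", "prev_hash")}
        if record["prev_hash"] != prev_hash:
            return False
        if _record_hash(body) != record["record_hash"]:
            return False
        prev_hash = record["record_hash"]
    return True


def content_is_registered(ledger: list, content: bytes) -> bool:
    """Return True if these exact bytes were registered earlier."""
    fingerprint = sha256_hex(content)
    return any(record["content_hash"] == fingerprint for record in ledger)


ledger = []
append_record(ledger, b"original video bytes", "newsroom-a")
append_record(ledger, b"second clip bytes", "newsroom-b")
```

Changing even one byte of a file changes its fingerprint, and editing a stored record breaks the chain check. Note the limit of this design: it shows that a file matches what was registered, not that the registered content was truthful in the first place.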

Conclusion

As you consider the ethics of deepfake technology, remember that reportedly nearly 80% of people can’t reliably tell real videos from fake ones. This highlights the urgent need for responsible use and regulation. You can advocate for transparency and ethical standards, helping to prevent misuse such as misinformation and personal harm. By staying informed and cautious, you can play a part in shaping a future where technology serves society ethically and safely.